In a companion post, I described why Heritage Guide exists — Parks Canada was about to decommission the Canadian Register of Historic Places, and I rebuilt the entire 13,554-site database as a modern bilingual web application in under 24 hours. This post covers the technical details of how that happened: the scraping pipeline, the database design, the SSR architecture, the AI-powered search system, and the Raspberry Pi deployment.

The entire application was built with Claude Code from a detailed specification document. The speed of the build was a direct consequence of this approach — describing what to build at a high level and letting Claude Code generate the implementation.

Python scraping pipeline for Parks Canada data

The first challenge was getting the data out of historicplaces.ca before the shutdown. The ingestion pipeline is a set of Python scripts that handle extraction, transformation, and loading.

The English scraper iterates through place IDs on the Parks Canada site, fetches each page, and parses it with BeautifulSoup. For each heritage site, it extracts over 15 structured fields: name, address, geographic coordinates, formally recognized date, description, heritage value statement, character-defining elements, themes, historic and current functions, jurisdiction, recognition details, and architect/builder attributions. Both thumbnail and full-resolution images are downloaded for every site — 55,069 image files in total, a dataset that Parks Canada’s Excel exports to provinces explicitly omitted. The scraper supports resume, so if interrupted it picks up where it left off. This was essential given the volume and the time pressure.
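The resume behaviour can be sketched as follows. This is a minimal sketch, not the project's scraper. `fetch_and_parse` is a hypothetical stand-in for the HTTP fetch plus BeautifulSoup parsing, and the one-JSON-file-per-place layout is an assumption. Each record is written atomically, so a restarted run only has to skip IDs whose output file already exists.

```python
import json
import os
from pathlib import Path
from typing import Callable, Iterable, Optional


def scrape_all(
    place_ids: Iterable[int],
    out_dir: Path,
    fetch_and_parse: Callable[[int], Optional[dict]],  # hypothetical fetch + parse step
) -> int:
    """Scrape every place ID, skipping ones already saved. Returns how many were newly written."""
    out_dir.mkdir(parents=True, exist_ok=True)
    written = 0
    for place_id in place_ids:
        target = out_dir / f"{place_id}.json"
        if target.exists():
            continue  # finished in an earlier run: this check is what makes the scrape resumable
        record = fetch_and_parse(place_id)
        if record is None:
            continue  # assumed convention: None means no page exists for this ID (retried next run)
        # Write to a temp file and rename, so an interruption never leaves a half-written record.
        tmp = target.with_suffix(".tmp")
        tmp.write_text(json.dumps(record, ensure_ascii=False), encoding="utf-8")
        os.replace(tmp, target)
        written += 1
    return written
```

Running it a second time over the same IDs writes nothing new, which is the property that matters when a long scrape is interrupted under time pressure.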

A parallel French scraper follows the same logic against the French-language pages, mapping French section headings to the same normalized field names. It reuses the English images rather than re-downloading them, cutting the French scrape time roughly in half. The French scrape produced 13,558 records to the English scrape’s 13,554, a small discrepancy reflecting a handful of sites that appear in one language version of the register but not the other.
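A minimal sketch of the heading normalization, assuming the scraper has already collected each page into a `{heading: text}` dict. The French heading strings and the field names below are hypothetical; the real page wording may differ. Headings are compared in Unicode NFC form so that an accent encoded as a separate combining character still matches.

```python
import unicodedata


def normalize_heading(heading: str) -> str:
    """Canonical form for comparison: NFC accents, no trailing colon or padding, lowercase."""
    return unicodedata.normalize("NFC", heading).strip().rstrip(":").strip().lower()


# Hypothetical French headings mapped to the shared (English-side) field names.
FR_HEADINGS = {
    normalize_heading(heading): field
    for heading, field in {
        "Description du lieu historique": "description",
        "Valeur patrimoniale": "heritage_value",
        "Éléments caractéristiques": "character_defining_elements",
        "Fonction historique": "historic_function",
        "Fonction actuelle": "current_function",
    }.items()
}


def map_sections(sections: dict[str, str]) -> dict[str, str]:
    """Rename scraped French sections to normalized field names; unknown headings are dropped."""
    mapped = {}
    for heading, text in sections.items():
        field = FR_HEADINGS.get(normalize_heading(heading))
        if field is not None:
            mapped[field] = text.strip()
    return mapped
```

Because both scrapers emit the same field names, the import step can treat English and French records identically.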

Import scripts handle the transformation into MongoDB: generating SEO-friendly slugs, parsing addresses to extract cities and provinces, transforming coordinates to GeoJSON format for geospatial indexing, and rewriting image URLs to point at S3 for production hosting. The entire pipeline — both language scrapes, imports, and image upload — completed in under six hours.

MongoDB schema design with geospatial indexing

The database is MongoDB, chosen for its flexible document schema (which accommodates the varied and sometimes inconsistent heritage data without rigid migrations), its native geospatial indexing, and its natural fit for the nested data structures in each heritage record — arrays of themes, functions, and images with sub-objects.

The data lives in two collections: one for English records and one for French, linked by a shared source ID that corresponds to the original Parks Canada place ID. Each document stores the complete heritage profile plus derived fields: the generated slug, parsed city and province, GeoJSON coordinates, S3 image URLs, and a vector embedding for semantic search.

Three indexes per collection make the queries performant at scale: a unique index on slug for URL lookups, a unique index on source ID for cross-language joins, and a 2dsphere index on the location field for geospatial queries. The geospatial index powers both the Near Me feature and proximity-sorted search results.
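The three indexes and a Near Me filter can be written down as plain MongoDB documents. This is a sketch with hypothetical field names (`slug`, `source_id`, `location`) and an assumed 25 km default radius. With PyMongo, each `(keys, options)` pair would be applied as `collection.create_index(keys, **options)`.

```python
# Index specifications as (keys, options) pairs; field names are hypothetical.
INDEXES = [
    ([("slug", 1)], {"unique": True}),       # URL lookups
    ([("source_id", 1)], {"unique": True}),  # cross-language joins
    ([("location", "2dsphere")], {}),        # geospatial queries on GeoJSON points
]


def near_me_query(lng: float, lat: float, max_meters: int = 25_000) -> dict:
    """Filter for places near a point; $near returns matches sorted nearest-first."""
    return {
        "location": {
            "$near": {
                "$geometry": {"type": "Point", "coordinates": [lng, lat]},
                "$maxDistance": max_meters,  # metres, because the query point is GeoJSON
            }
        }
    }
```

`$near` requires a geospatial index on the queried field, which is why the 2dsphere index is not optional for this feature.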

French content does not duplicate images. When the server needs to return French data with photographs — for search results, featured sites, city pages, or related places — it joins with the English collection by source ID to retrieve the image array. This keeps the French collection lean while maintaining a complete bilingual experience.
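One way to express that join is a `$lookup` aggregation on the French collection. This is a sketch under stated assumptions: the collection name `places_en` and the fields `source_id` and `images` are hypothetical, and the `$set`/`$unset` stages need MongoDB 4.2 or later. Sites that exist only in the French register simply get an empty image list.

```python
def french_with_images(match: dict, en_collection: str = "places_en") -> list[dict]:
    """Aggregation pipeline for French records that borrows `images` from the English twin."""
    return [
        {"$match": match},
        {
            "$lookup": {
                "from": en_collection,
                "localField": "source_id",
                "foreignField": "source_id",
                "as": "en",
            }
        },
        # `en` is an array of matches (at most one, given the unique source_id index).
        # Take the first match's images, or [] when there is no English counterpart.
        {"$set": {"images": {"$ifNull": [{"$arrayElemAt": ["$en.images", 0]}, []]}}},
        {"$unset": "en"},  # drop the joined English document from the response
    ]
```

With PyMongo this would run as `db["places_fr"].aggregate(french_with_images({"slug": slug}))`, again with hypothetical collection names.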

React server-side rendering with 27,500 prerendered pages

The frontend is React with server-side rendering via Express. On each request, the server determines the language and slug from the URL, fetches the relevant data from MongoDB, renders the React component tree to an HTML string, and injects it into the page template along with the serialized data for client-side hydration.
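The request handling around the React render can be sketched in two small functions, shown in Python to keep one language across these examples; in the actual Express server the same logic would sit in a route handler. The URL shape and the `window.__INITIAL_DATA__` global are hypothetical. The escaping step matters: serialized data containing `</script>` would otherwise close the script tag early.

```python
import json
from typing import Optional


def parse_route(path: str) -> tuple[str, Optional[str]]:
    """Split a URL path into (language, slug). English has no prefix; French lives under /fr/."""
    parts = [p for p in path.split("/") if p]
    lang = "en"
    if parts and parts[0] == "fr":
        lang, parts = "fr", parts[1:]
    # Hypothetical URL shape: the last path segment is the slug.
    slug = parts[-1] if parts else None
    return lang, slug


def hydration_script(data: dict) -> str:
    """Serialize server data for client hydration, escaping '<' so '</script>' cannot end the tag."""
    payload = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return f"<script>window.__INITIAL_DATA__ = {payload};</script>"
```

`\u003c` is still a valid JSON and JavaScript escape for `<`, so the client parses the same data it would have received unescaped.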

For production, the entire site is prerendered to static HTML. A build script launches the SSR server and uses Puppeteer to crawl every page in headless Chrome: every heritage site in both languages, every city page, every topic page, and every static page. That is over 27,500 pages. The output is a directory of flat HTML files that nginx can serve directly, with no rendering work at request time.
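The crawl's two essential pieces are a URL-to-file mapping and a cap on how many pages render at once (the post later mentions prerendering concurrency management as one of the areas that needed iteration). This is a sketch in Python's `asyncio`, not the project's Puppeteer script: `render` is a hypothetical stand-in for loading a URL in headless Chrome and returning its HTML, and the `index.html` directory layout is an assumption.

```python
import asyncio
from pathlib import PurePosixPath
from typing import Awaitable, Callable


def output_path(url_path: str) -> PurePosixPath:
    """Map a URL to a flat file nginx can serve: /fr/lieu/x -> fr/lieu/x/index.html."""
    clean = url_path.strip("/")
    return PurePosixPath(clean, "index.html") if clean else PurePosixPath("index.html")


async def prerender_all(
    paths: list[str],
    render: Callable[[str], Awaitable[str]],  # hypothetical: URL path -> rendered HTML
    concurrency: int = 4,
) -> dict[PurePosixPath, str]:
    """Render every path with at most `concurrency` pages in flight at a time."""
    sem = asyncio.Semaphore(concurrency)
    results: dict[PurePosixPath, str] = {}

    async def one(path: str) -> None:
        async with sem:  # caps simultaneous browser pages, which bounds memory use
            results[output_path(path)] = await render(path)

    await asyncio.gather(*(one(p) for p in paths))
    return results
```

Without the semaphore, 27,500 pages would be opened at once; with it, memory stays bounded by roughly `concurrency` open pages.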

This split architecture is the key to running on a Raspberry Pi. The vast majority of page loads are served as static files. The Node.js API server only handles the operations that genuinely need server logic: search, autocomplete, semantic search, nearby queries, and the contributor system.

AI-powered semantic search with vector embeddings and HNSW

The original historicplaces.ca offered basic keyword search: a multi-word query matched only when its words appeared consecutively and in the exact order typed, and results had no relevance ranking. Heritage Guide replaces this with a three-tier search system.

The first tier is autocomplete — as the user types, pattern matching against name, city, and address returns suggestions in real time. The second tier is keyword search with province filtering, pagination, and optional geolocation. When the user shares their location, results are sorted by distance using MongoDB’s geospatial aggregation pipeline.
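The first two tiers can be sketched as MongoDB query documents. Field names are hypothetical, and the page size of 20 is an assumption. `re.escape` keeps user input literal, so a query like "St. John" does not treat the dot as a wildcard. `$geoNear` must be the first stage of its pipeline; it sorts by distance and records each distance (in metres for GeoJSON points) in the named field.

```python
import re


def autocomplete_filter(prefix: str) -> dict:
    """Case-insensitive pattern match on name, city, or address."""
    pattern = re.escape(prefix.strip())
    return {"$or": [{f: {"$regex": pattern, "$options": "i"}} for f in ("name", "city", "address")]}


def keyword_search_pipeline(
    text_filter: dict, lng: float, lat: float, skip: int = 0, limit: int = 20
) -> list[dict]:
    """Keyword (and optional province) filter, sorted by distance from the user, then paginated."""
    return [
        {
            "$geoNear": {
                "near": {"type": "Point", "coordinates": [lng, lat]},
                "distanceField": "distance_m",
                "spherical": True,
                "query": text_filter,  # e.g. {**autocomplete_filter(q), "province": "NS"}
            }
        },
        {"$skip": skip},
        {"$limit": limit},
    ]
```

Putting the keyword filter inside `$geoNear`'s `query` option, rather than in a later `$match`, lets the distance sort and the filter run in one indexed step.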

The third tier is semantic search. At build time, a script generates vector embeddings for every record in both languages using OpenAI’s embedding model. Each record’s embedding is assembled from the place name, city, province, description, and heritage value statement — giving the model a rich representation of what the site is and why it matters. The embeddings are stored directly in MongoDB.
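Assembling the text to embed is the step most worth getting right, since it decides what "similar" means. A minimal sketch, assuming records use the hypothetical keys below; empty fields are skipped rather than embedded as the string "None". The embedding call itself is omitted because the post does not name the model; with OpenAI's official Python SDK it would go through `client.embeddings.create(model=..., input=texts)`.

```python
def embedding_text(record: dict) -> str:
    """Join the fields the post lists, in order, skipping any that are missing or empty."""
    fields = ("name", "city", "province", "description", "heritage_value")
    return "\n".join(str(record[f]).strip() for f in fields if record.get(f))
```

Including the heritage value statement is what lets two sites with different names and locations land near each other when their significance is described in similar terms.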

At server startup, all embeddings are loaded into in-memory HNSW indexes (one per language) for approximate nearest neighbor search. HNSW provides sub-millisecond query times with high recall using cosine similarity. When a user searches for a concept — “fur trade” or “Victorian architecture” or “wartime industry” — the query is embedded in real time and the index returns the most semantically similar heritage sites. The user does not need exact keywords. They can search by meaning.
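HNSW is an approximation of exact nearest-neighbour search, so the clearest way to show what it computes is the exact version it replaces. The sketch below is brute-force cosine k-NN, not HNSW itself, and the post does not name the HNSW library the server uses. Brute force compares the query against every stored vector; HNSW walks a layered proximity graph and visits only a small fraction of them, trading a little recall for much lower latency.

```python
import heapq
import math


def normalize(v: list[float]) -> list[float]:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm else v


def top_k_cosine(query: list[float], vectors: dict[str, list[float]], k: int = 5) -> list[tuple[str, float]]:
    """Exact cosine k-NN over every stored vector: the result an HNSW index approximates."""
    q = normalize(query)
    # The dot product of two unit vectors is their cosine similarity.
    # (A real index would normalize stored vectors once, at insert time.)
    scored = ((key, sum(a * b for a, b in zip(q, normalize(v)))) for key, v in vectors.items())
    return heapq.nlargest(k, scored, key=lambda item: item[1])
```

At this dataset's size, brute force would still be usable; the index earns its place by keeping query latency low on a Raspberry Pi CPU.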

The search page routes intelligently between tiers: text queries go to semantic search for conceptual matching, while location-enabled queries go to the geospatial keyword search for proximity-based results. The user does not need to understand this distinction.

The same embedding infrastructure powers the related places feature on each heritage site page, surfacing connections that would be invisible to keyword matching — a fortification in Ontario appearing as related to a military installation in Quebec because their heritage value statements describe similar historical significance.
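Related places reuse the same index: query with the place's own stored embedding and drop the place itself from the results. A minimal sketch against a hypothetical `search(vector, k)` interface that returns `(place_id, similarity)` pairs, best first.

```python
from typing import Callable, Sequence

# Hypothetical interface: search(vector, k) -> up to k (place_id, similarity) pairs, best first.
Search = Callable[[Sequence[float], int], list[tuple[str, float]]]


def related_places(
    place_id: str, embeddings: dict[str, Sequence[float]], search: Search, k: int = 6
) -> list[str]:
    """Nearest neighbours of a place's own embedding, minus the place itself."""
    # Ask for one extra hit: a place's own vector is normally its own nearest neighbour.
    hits = search(embeddings[place_id], k + 1)
    return [pid for pid, _ in hits if pid != place_id][:k]
```

No new query text needs to be embedded, so this costs one index lookup per page and can also be computed ahead of time during prerendering.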

Bilingual English-French web application architecture

Canada is a bilingual country, and any credible replacement for a federal heritage database must work fully in both English and French. Bilingual support is implemented at every layer.

English routes use no URL prefix. French routes use /fr/. The server determines the language from the path and routes to the appropriate database collection. A React context provides the current language to the component tree along with a translation function, localized field accessors, and locale-aware formatting. Every content page contains both English and French static text, selected at render time.
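The core of the translation layer is small enough to sketch directly. It is shown in Python for consistency with the other examples, whereas the real helpers live in a React context. The message keys and strings are hypothetical. Falling back to English, then to the key itself, means a missing translation degrades visibly instead of rendering a blank.

```python
# Hypothetical UI strings; the real app exposes these through its React language context.
MESSAGES = {
    "en": {"search": "Search", "near_me": "Near me"},
    "fr": {"search": "Rechercher", "near_me": "Près de moi"},
}


def t(key: str, lang: str) -> str:
    """Translate a UI key, falling back to English, then to the key itself."""
    return MESSAGES.get(lang, {}).get(key) or MESSAGES["en"].get(key) or key


def pick(text: dict[str, str], lang: str) -> str:
    """Select one language from a static {'en': ..., 'fr': ...} block at render time."""
    return text.get(lang) or text["en"]
```

`pick` mirrors the post's description of content pages that carry both English and French static text and select one during rendering.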

Cross-language navigation uses the shared source ID. When a user switches languages, the application finds the corresponding slug in the other collection — a single database query. The language toggle preserves the user’s position in the site. The SEO layer generates proper hreflang alternate links and bilingual sitemap entries for every page, ensuring search engines understand the relationship between language versions.
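Once the counterpart slug is known, the hreflang block for a page follows mechanically. A sketch under assumptions: the `https://heritageguide.ca` base, the example paths, and the choice of English as `x-default` are not stated in the post. Each language version should carry the same full set of alternates, including a link to itself.

```python
from html import escape

BASE = "https://heritageguide.ca"  # assumed scheme and host


def hreflang_links(en_path: str, fr_path: str) -> str:
    """Alternate-language <link> tags; emit the same set on both language versions of a page."""
    links = [
        ("en", BASE + en_path),
        ("fr", BASE + fr_path),
        ("x-default", BASE + en_path),  # assumption: English serves unmatched locales
    ]
    return "\n".join(
        f'<link rel="alternate" hreflang="{lang}" href="{escape(url)}" />' for lang, url in links
    )
```

Because English and French slugs can differ, both paths come from the source-ID lookup rather than from string substitution on the current URL.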

Interactive heritage map with MapLibre GL and client-side filtering

The interactive Explore Map renders all 13,554 heritage sites on a single map using MapLibre GL. The map uses a custom vector tile style with a heritage-appropriate palette — parchment backgrounds, gold major roads, and burgundy highways.

Rendering that many markers simultaneously requires careful data management. At build time, a script generates GeoJSON files for both languages with filter metadata embedded in each feature’s properties: construction year, recognition year, function category, and theme category. All filtering happens client-side against this pre-generated dataset. A filter panel offers range sliders for construction period and recognition date, plus dropdowns for province, function, and theme. As filters change, MapLibre filter expressions update instantly — no server round-trips involved. A live count of visible sites updates in real time. This approach keeps the map responsive even on the Raspberry Pi, since the server is never touched.
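MapLibre filter expressions are plain JSON arrays, so the panel state can be turned into one expression and applied with `map.setFilter(layerId, expr)` in the browser (passing `null` clears the filter). The sketch below builds that expression and also evaluates the same subset in plain code, which is one way to produce the live count. It is written in Python for consistency with the other examples, and the property names (`built_year`, `recognized_year`, `province`, and so on) are hypothetical.

```python
from typing import Any, Optional

Expr = list  # a MapLibre expression is nested JSON lists


def build_filter(
    built: Optional[tuple[int, int]] = None,
    recognized: Optional[tuple[int, int]] = None,
    **categories: Optional[str],
) -> Optional[Expr]:
    """Filter expression for the current panel state, or None when nothing is filtered."""
    clauses: list[Expr] = []
    for prop, bounds in (("built_year", built), ("recognized_year", recognized)):
        if bounds is not None:
            lo, hi = bounds
            # `has` comes first so features with no known year are excluded, not compared.
            clauses += [["has", prop], [">=", ["get", prop], lo], ["<=", ["get", prop], hi]]
    for prop, value in categories.items():  # e.g. province="ON", function_cat="Military"
        if value is not None:
            clauses.append(["==", ["get", prop], value])
    return ["all", *clauses] if clauses else None


def matches(expr: Optional[Expr], props: dict[str, Any]) -> bool:
    """Evaluate the same expression subset against one feature's properties."""
    if expr is None:
        return True
    op = expr[0]
    if op == "all":
        return all(matches(e, props) for e in expr[1:])
    if op == "has":
        return expr[1] in props
    value, target = props.get(expr[1][1]), expr[2]  # expr[1] is ["get", prop]
    if value is None:
        return False
    if op == ">=":
        return value >= target
    if op == "<=":
        return value <= target
    if op == "==":
        return value == target
    raise ValueError(f"unsupported operator: {op}")
```

The visible count is then `sum(matches(expr, f["properties"]) for f in features)`, computed entirely in the browser against the pre-generated GeoJSON.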

Community contributor system with moderated edits

Heritage data is not static. Buildings get renovated, demolished, or repurposed. New sites get designated. Existing records contain errors. Heritage Guide includes a contributor system that creates a path for the heritage community to maintain the data over time.

Registered users can submit edits to place data — text corrections, new photographs (uploaded directly to S3), and location corrections via an interactive map picker. Each submission enters a review queue with a before-and-after diff. Admins review, approve, or reject edits through a dashboard. This ensures data integrity while lowering the barrier for heritage professionals to contribute their expertise without needing direct database access.
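The review flow reduces to a field-level diff plus a one-way status change. This is a minimal sketch; the `Submission` shape and the `pending`/`approved`/`rejected` states are hypothetical. The key property is that the stored record changes only when an admin approves.

```python
from dataclasses import dataclass
from typing import Any


def field_diff(current: dict[str, Any], proposed: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Before/after pairs for only the fields the contributor actually changed."""
    return {k: (current.get(k), v) for k, v in proposed.items() if current.get(k) != v}


@dataclass
class Submission:
    place_id: str
    diff: dict[str, tuple[Any, Any]]
    status: str = "pending"  # hypothetical states: pending -> approved | rejected

    def review(self, approve: bool, record: dict[str, Any]) -> None:
        """Apply an admin decision; approved diffs are written into the stored record."""
        if self.status != "pending":
            raise ValueError("submission already reviewed")
        self.status = "approved" if approve else "rejected"
        if approve:
            for key, (_, after) in self.diff.items():
                record[key] = after  # only approved edits ever touch the record
```

Storing the "before" value alongside the "after" value is what lets the dashboard show a diff even if the record is later edited again.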

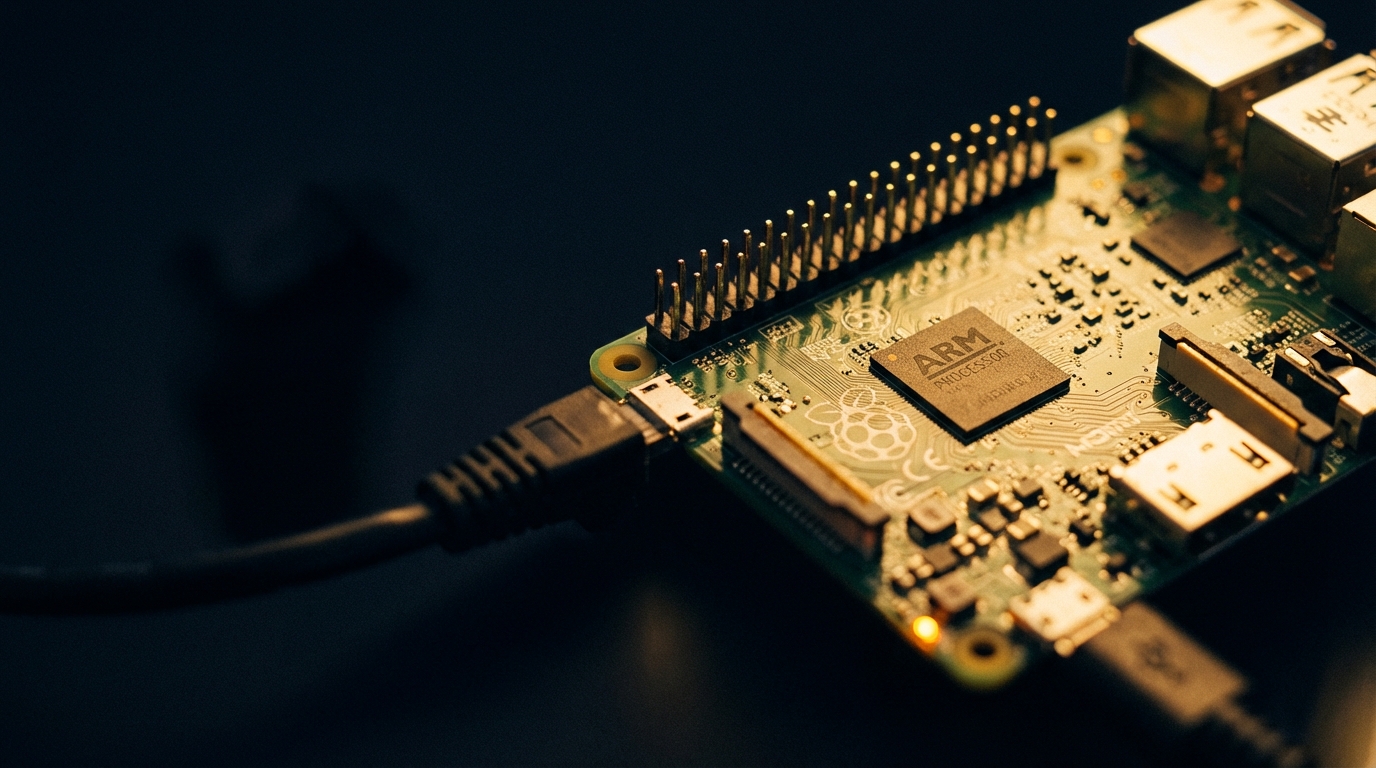

Deploying a full-stack application on a Raspberry Pi

The production deployment runs on a Raspberry Pi. The architecture is designed to minimize the computational load while maintaining the full feature set.

MongoDB runs in a Docker container on the host. The API server runs in a separate container, built for ARM64. Nginx serves the 27,500+ prerendered static HTML files for all initial page loads and reverse-proxies API requests to the Node.js container. This means the Pi’s CPU is almost entirely idle during normal browsing — nginx serving flat files requires negligible resources. The Node.js process only activates for search queries, autocomplete, and contributor operations.

Images are hosted on S3 rather than served from the Pi, keeping bandwidth manageable. The HNSW vector indexes load into RAM at startup — the full set of embeddings for 13,554 records in both languages fits comfortably in memory alongside everything else.
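The memory claim can be checked with back-of-the-envelope arithmetic. The record counts come from the scrape (13,554 English plus 13,558 French). The 1,536-dimension size is an assumption, since the post does not name the embedding model, as is storage as 4-byte float32 values. The HNSW graph's neighbour lists add further memory on top of the raw vectors.

```python
def embedding_bytes(records: int, dims: int, bytes_per_value: int = 4) -> int:
    """Raw vector storage only; an HNSW index adds its neighbour lists on top."""
    return records * dims * bytes_per_value


total = 13_554 + 13_558  # English + French records from the scrape
raw = embedding_bytes(total, 1536)  # 1536 dims and float32 are assumptions
print(f"{raw:,} bytes = {raw / 2**20:.1f} MiB")  # prints: 166,576,128 bytes = 158.9 MiB
```

If the vectors were held as 8-byte floats instead, the figure would double, still well within the RAM of current Raspberry Pi models.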

The result is a complete heritage database application — bilingual, semantically searchable, geographically explorable, community-editable — running on hardware that costs less than dinner for two. The power draw is measured in single-digit watts. Parks Canada said the original site was too technically complex and costly to maintain. The technical complexity argument does not survive contact with the deployment details.

AI-assisted development with Claude Code

The entire application was built with Claude Code from a specification document describing the target architecture, the data model, the bilingual requirements, the search system, the SSR pipeline, the map features, the contributor system, and the deployment constraints. Claude Code generated the scraping pipeline, the database layer, the React frontend, the API endpoints, the search implementations, the map integration, the bilingual system, the authentication layer, the prerendering pipeline, and the Docker configuration.

This is not to say the process was fully automatic. The specification itself required significant thought about architecture, data modeling, and user experience. The generated code required review, testing, and iteration — particularly around bilingual data handling edge cases, geospatial query tuning, and prerendering concurrency management. But the core velocity — from specification to working application in under 24 hours — was a direct consequence of being able to describe the system at a high level and have Claude Code handle the implementation.

The speed mattered. This was not an academic exercise. There was a national heritage database about to be decommissioned, and the data needed to be captured and made accessible before the shutdown deadline. Claude Code turned what would have been a multi-week project into a weekend sprint. The result is a production application serving real users, covered by national media, and running on a Raspberry Pi.

Heritage Guide is live at heritageguide.ca. The source of the heritage data is the Canadian Register of Historic Places. The project is an independent initiative with no affiliation with Parks Canada or the Government of Canada.